Creating usable products that are compelling to users requires a lot of work. Usability testing is a key method for ensuring the success of a new or existing product release.

Methods and Tools:

• Moderated Usability Testing

MY ROLE:

From 2018 to 2019, I worked with a window manufacturing company as a research and design consultant to better understand their digital tools from the perspective of their customers. My goal for this specific project was to usability test a tool that was about to be published to their main website, as well as a “conceptual” tool that needed a different approach to test how useful it was to its intended audiences. I ran two rounds of testing, wrote research scripts, recorded and transcribed session notes, and translated data into actionable insights for the design and product team.

THE PROBLEM:

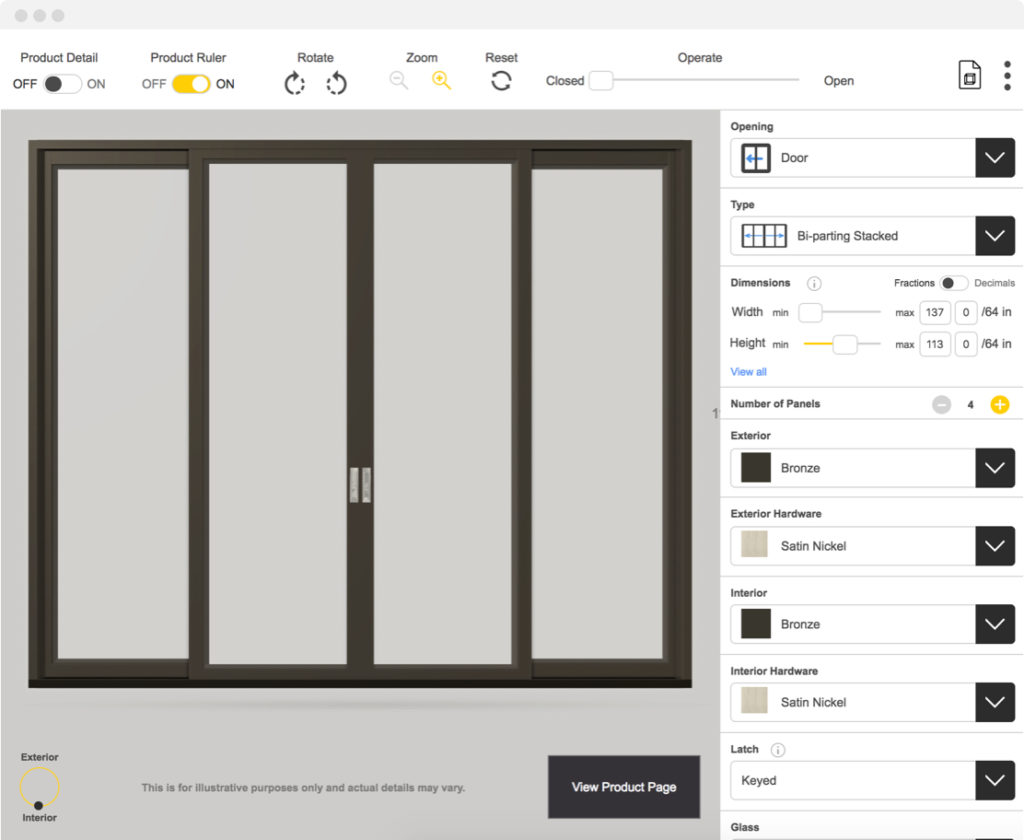

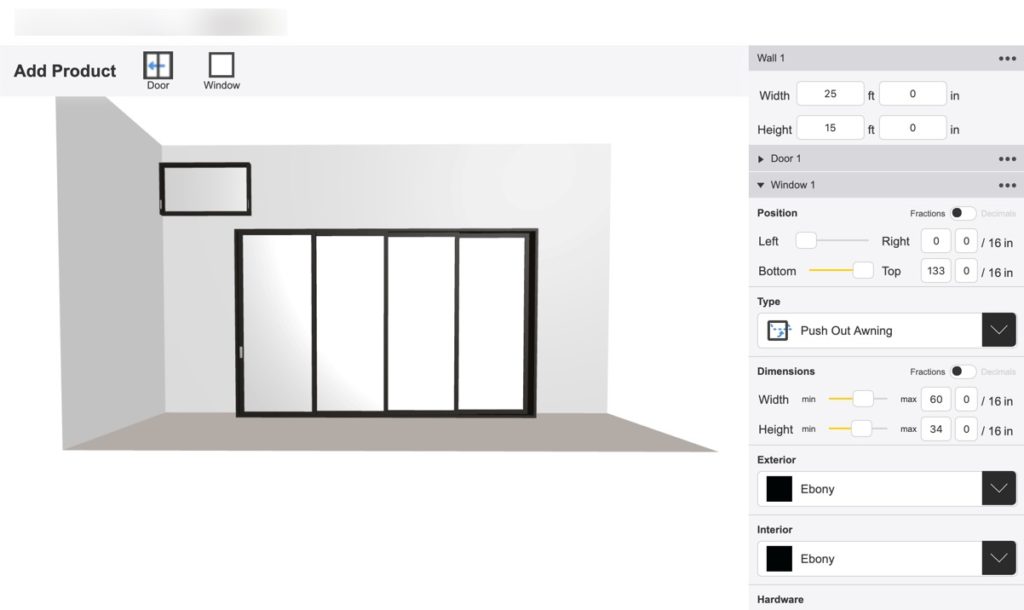

The client came to me with two different live prototypes that enabled end users to create their own visualizations of the client’s window and door products. The goal of these tools was to help end users explore the options the client’s newest product line could offer and to contextualize color, size, operation, and product details when deciding which product to use for their project. The issue was that the tools had been built in silos, without data on the specific UI cues and elements needed for successful use or on how users would actually react to the ability to create their own product.

With this issue in mind, and with the main “build a window” product launch coming soon, we needed to identify and alleviate usability issues as quickly as possible. Then, once we had more time to explore, I proposed conducting the second round as a hybrid interview, concept testing, and usability testing session to better understand the user needs for each product and how to create better products with the user in mind, using specific, actionable parameters.

MY PROCESS:

ROUND 1: USABILITY TESTING

The main goal of the first round of testing was to run a formal usability test with the three major user types: contractors, homeowners, and architects. I scheduled and coordinated sessions with these users, created scripts and note-taking sheets, and transcribed notes. Throughout the testing period, I also shared quick insights with the product team so they could tackle any make-or-break issues I saw during the sessions. Finally, I translated the findings into a formal document with actionable recommendations.

Each session was an hour long and was conducted remotely over the phone. I worked closely with another researcher to conduct these tests, and we used a collaborative note document as a “source of truth” for the main UI concerns we observed during testing.

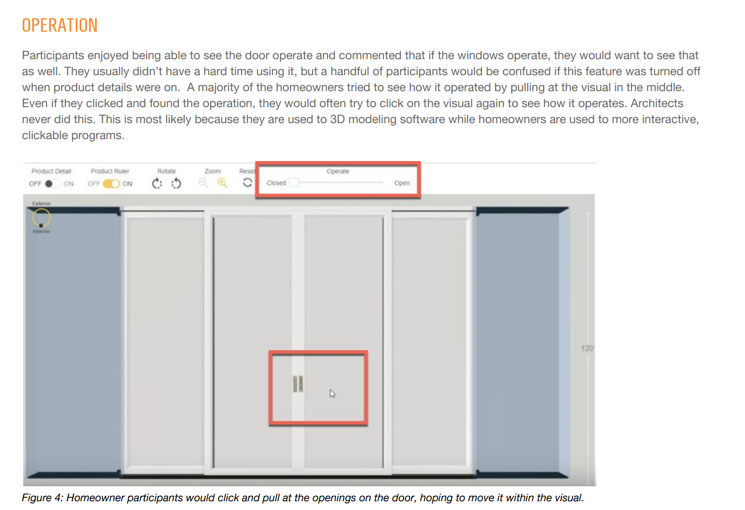

During testing, it was clear that users were excited to jump into the tool and try it out. They started clicking around and moving elements even before they were given a task. But as we dug deeper, we started to uncover UI cues that prevented them from accomplishing their goals, frustrated them, or confused them, and their excitement started to dim.

For example, the dropdown design options on the right had labels that confused users. The tips and education for these labels were hidden underneath a button on the other end of the UI, so users could not learn how to use specific design elements and did not feel as empowered as the client hoped they’d be.

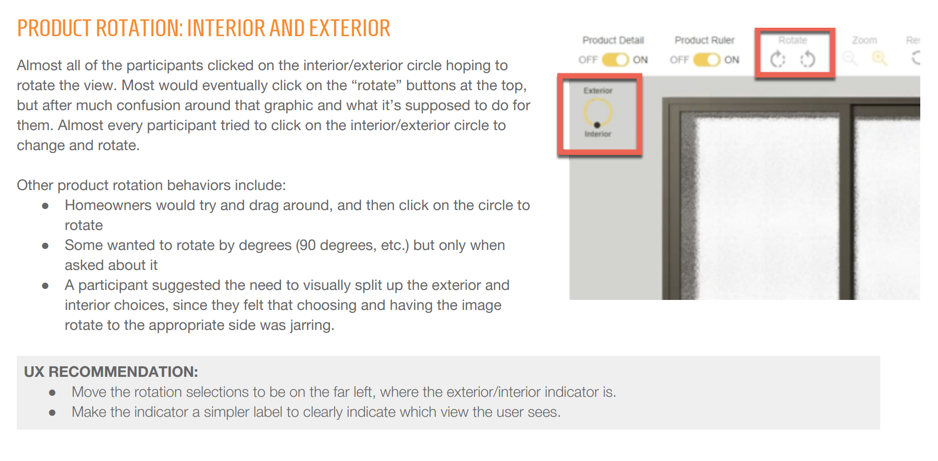

After testing was completed, I ran an affinity diagramming exercise to translate and group my findings into actionable categories that could be prioritized and fixed before the product launch. In the research findings document, I used visual indicators to showcase the issues users had.

ROUND 2: CONCEPT/USABILITY TESTING

With the second round, we wanted to re-approach the visualization tool by evaluating another tool the client had created. This tool allowed the end user to build a wall within a house, customize its size and dimensions, and “place” a pre-made product within the view. Less exploratory research had been done with this product, and identifying only the usability issues wouldn’t have been as useful to the team, so we combined methods and paired concept testing with usability testing. This helped us come up with a holistic recommendation for the product and allowed the team to understand the strategy and next steps needed to make a more compelling user experience.

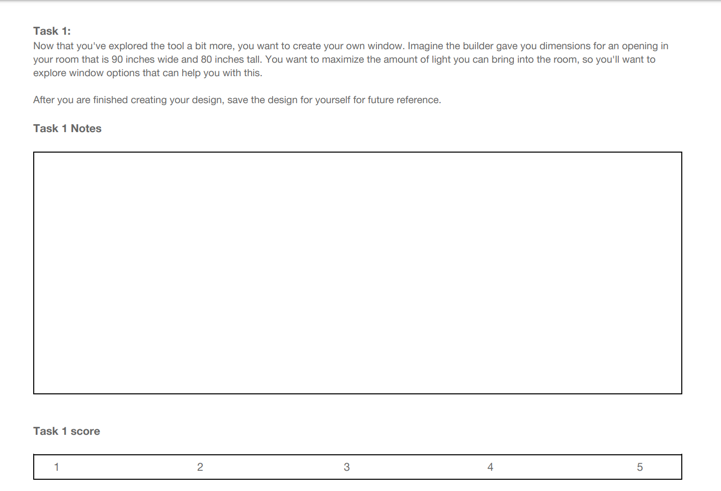

Concept testing is a great way to elicit responses from users on their first impressions of a tool, their emotions associated with specific UI cues, and their satisfaction with the interface elements. I gathered responses from users on their first impression of the designed tool and what they thought the tool did, and I documented the words and reactions that came up most often.

I completed the same number of testing sessions as in the first round. I wrote the script, transcribed the sessions, and shared key insights with various teams at the client’s request.

The goal was to reach a consensus from the team on what to do next, so the analysis and storytelling of the data were key. Creating a typical usability findings document wasn’t the best approach for their needs, so I had to tell a unique story combining the findings from the two halves of each session.

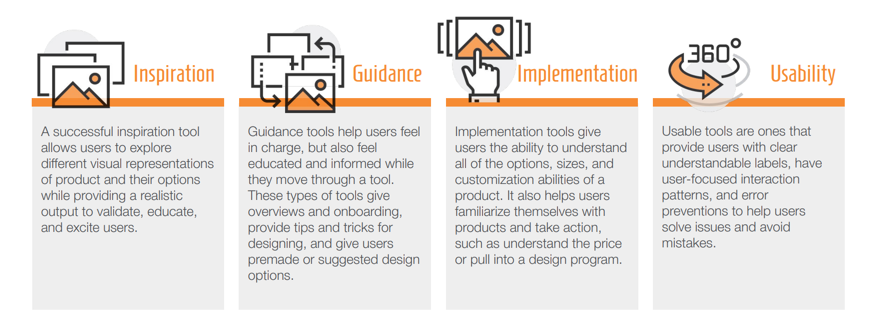

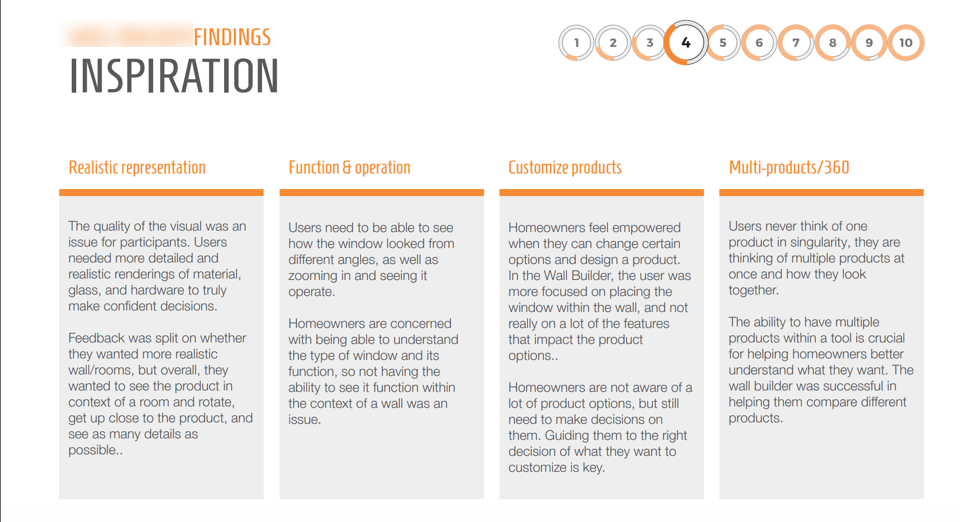

When analyzing the data, I created four parameters to represent the core user needs: “inspiration, implementation, education, and usability”. Each session was assigned a numeric “success” score based on feedback from the user and my observation of their interaction with the tool. Then, throughout the presentation, I broke down each parameter and the success of the two tools, with next steps included.
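To make the scoring idea concrete, here is a minimal sketch of how per-session scores could be rolled up by tool and parameter. Everything specific in it is an assumption for illustration only: the 1 to 5 scale, the tool labels `tool_a` and `tool_b`, the use of a simple average, and the placeholder numbers, none of which come from the actual study.

```python
from statistics import mean

# The four parameters named in the analysis.
PARAMETERS = ("inspiration", "implementation", "education", "usability")

# Hypothetical placeholder scores on an assumed 1-5 scale.
# These are NOT results from the study; they only show the shape of the data.
sessions = [
    {"tool": "tool_a", "scores": {"inspiration": 4, "implementation": 2, "education": 3, "usability": 3}},
    {"tool": "tool_a", "scores": {"inspiration": 5, "implementation": 3, "education": 2, "usability": 4}},
    {"tool": "tool_b", "scores": {"inspiration": 2, "implementation": 4, "education": 3, "usability": 2}},
    {"tool": "tool_b", "scores": {"inspiration": 3, "implementation": 4, "education": 4, "usability": 3}},
]


def average_scores(session_records):
    """Return {tool: {parameter: mean score}} across all sessions for each tool."""
    grouped = {}
    for record in session_records:
        # Start an empty list of scores per parameter the first time a tool appears.
        per_tool = grouped.setdefault(record["tool"], {p: [] for p in PARAMETERS})
        for parameter in PARAMETERS:
            per_tool[parameter].append(record["scores"][parameter])
    return {
        tool: {parameter: mean(values) for parameter, values in per_parameter.items()}
        for tool, per_parameter in grouped.items()
    }


summary = average_scores(sessions)
for tool, averages in summary.items():
    print(tool, averages)
```

A table like this makes it easy to compare the two tools one parameter at a time, which is roughly the structure the presentation followed.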

OUTCOME:

The first visualization tool that launched has received a lot of engagement, and the team continues to make UI enhancements based on Google Analytics data and the initial feature enhancement list we provided. The team values the research and the story presented to the product teams, and continues to share it across the company to show the importance of testing and user feedback.